It addresses one of the most difficult shifts teams face in software development: breaking the habit of treating testing as an activity that happens after coding.

In many companies, the separation between development and testing is deeply embedded in organizational structures, role definitions, and processes.

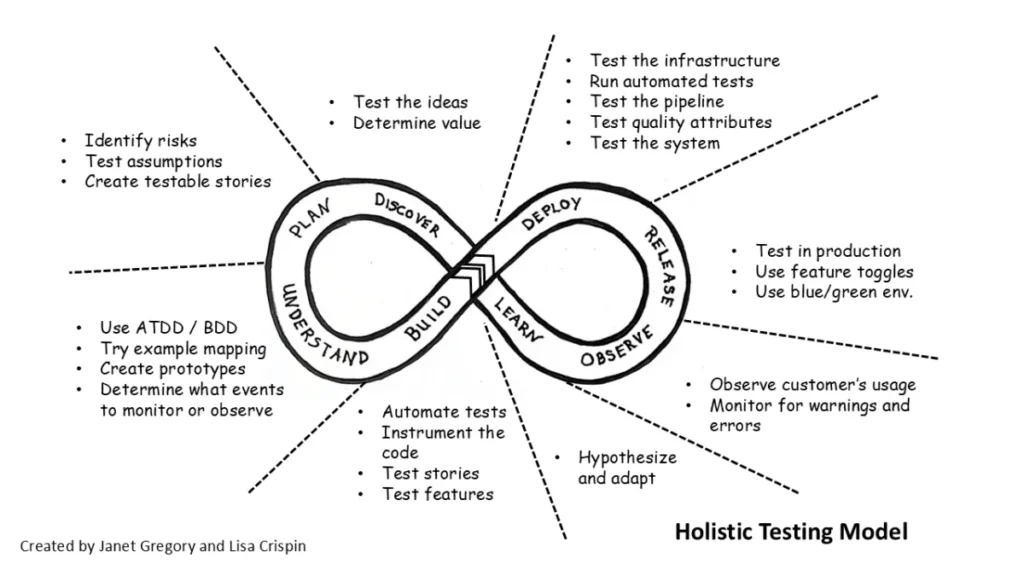

The Holistic Testing Model, developed by Janet Gregory and Lisa Crispin, helps teams break down that separation. It frames quality as an intentional practice that begins with early discussions about business value and continues through delivery and into continuous learning.

Instead of asking, “Did we test it?”, the model encourages deeper questions:

- How are we preventing misunderstandings?

- How are we reducing risk early?

- How are we validating outcomes?

- How are we learning from real usage?

- How are we strengthening shared responsibility?

This approach makes quality practices visible across the lifecycle, connecting business needs, engineering practices, and feedback loops.

The Approach of the Holistic Testing Model

The model was inspired in part by Dan Ashby’s “We test here” visualization and evolved from the agile testing mindset, extending its emphasis on collaboration and continuous feedback beyond individual iterations. It makes explicit that testing and quality practices belong in every phase of the lifecycle, and it offers example practices that teams can apply in each phase.

These practices are neither prescriptive nor exhaustive. The goal is not for teams to apply every practice, but for each team to adopt those that make sense based on their context, system complexity, risks, and organizational constraints. The model provides a shared reference point for discussing how quality is built and validated throughout the lifecycle.

On the left side of the loop, teams focus on clarifying expectations, making risks visible, and building a shared understanding of the system they are about to build. That shared understanding guides development and helps prevent defects early.

On the right side, the focus shifts to evaluating what has already been built. Here, teams validate system behavior, identify defects that escaped during development, and learn from real usage in production. Feedback from deployment and production informs future iterations.

The balance between prevention, validation, and learning is what makes the approach holistic.

Prevention: Designing Quality Before Code Exists

During the discover, plan, and understand phases, teams focus on activities such as:

- Testing ideas and assumptions

- Identifying risks before implementation

- Slicing features into small, testable stories

- Using concrete examples to clarify expectations

- Defining quality attributes relevant to their context, such as performance, reliability, and security

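Practices such as acceptance test-driven development (ATDD), behavior-driven development (BDD), and test-driven development (TDD) clarify expectations before implementation: ATDD and BDD through collaborative, example-based conversations, and TDD at the level of code design.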

These activities help teams reach a shared understanding of what they intend to build, leading to benefits such as:

- Reduced story rejection

- Reduced rework

- Shorter feedback cycles

- Increased confidence
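To make "using concrete examples to clarify expectations" more tangible, here is a minimal Python sketch. It assumes a hypothetical business rule, invented purely for illustration: orders of 50.00 or more ship for free, and smaller orders pay a flat 4.99 fee. In an ATDD or BDD conversation, the team would agree on examples like these before writing any code; the names `EXAMPLES`, `shipping_fee`, and `check_examples` are illustrative and are not part of the Holistic Testing Model itself.

```python
from decimal import Decimal

# Hypothetical rule agreed on with the business (illustrative values only):
# orders of 50.00 or more ship free; smaller orders pay a flat 4.99 fee.
FREE_SHIPPING_THRESHOLD = Decimal("50.00")
FLAT_FEE = Decimal("4.99")

# Concrete examples captured during the conversation, before implementation.
# Each row: (order total, expected fee, why this example matters).
EXAMPLES = [
    (Decimal("10.00"), Decimal("4.99"), "small order pays the flat fee"),
    (Decimal("49.99"), Decimal("4.99"), "just below the threshold"),
    (Decimal("50.00"), Decimal("0.00"), "exactly at the threshold ships free"),
    (Decimal("120.00"), Decimal("0.00"), "large order ships free"),
]


def shipping_fee(order_total: Decimal) -> Decimal:
    """Implementation written afterwards to satisfy the agreed examples."""
    if order_total < Decimal("0"):
        raise ValueError("order total cannot be negative")
    if order_total >= FREE_SHIPPING_THRESHOLD:
        return Decimal("0.00")
    return FLAT_FEE


def check_examples() -> list[str]:
    """Return a description of every agreed example the implementation violates."""
    failures = []
    for total, expected, reason in EXAMPLES:
        actual = shipping_fee(total)
        if actual != expected:
            failures.append(f"{reason}: total={total}, expected={expected}, got={actual}")
    return failures


if __name__ == "__main__":
    problems = check_examples()
    print("all examples pass" if not problems else "\n".join(problems))
```

The boundary example at exactly 50.00 is the kind of case that concrete examples tend to surface early: without it, business and development might each assume a different answer to whether "over 50" includes 50.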

Validation: Continuous Feedback Across the Lifecycle

Prevention builds alignment and reduces risk. Validation evaluates what has already been built, confirming that it works as expected under real conditions and identifying defects that escaped during development. Both are essential, which is why the right side of the loop matters as much as the left.

During the deploy, release, observe, and learn phases, teams validate system behavior and learn from how it is used in production.

Validation relies on practices such as:

- Automated regression suites designed with purpose

- Deployment pipelines that prioritize fast feedback

- Quality attribute testing in appropriate environments

- Exploratory testing to uncover unanticipated scenarios

- Safe release strategies such as feature toggles

- Monitoring and observability practices
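Feature toggles are one of the safe release strategies mentioned in the list above. The following minimal Python sketch illustrates the core mechanics with a hypothetical `FeatureToggle` class that is not taken from any specific library: a change ships behind a global kill switch, is enabled first for an allow list (for example, internal testers), and then for a stable percentage of users. Teams typically use a dedicated toggle service or library in practice; this in-memory version is only an assumption-based illustration.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class FeatureToggle:
    """Hypothetical in-memory toggle with a kill switch and a percentage rollout."""

    name: str
    enabled: bool = False      # global kill switch: False turns the feature off for everyone
    rollout_percent: int = 0   # share of users (0-100) who see the feature
    allow_list: set[str] = field(default_factory=set)  # e.g. internal testers

    def is_on_for(self, user_id: str) -> bool:
        if not self.enabled:
            return False
        if user_id in self.allow_list:
            return True
        # Hash toggle name + user ID so each user lands in a stable bucket 0-99
        # for this toggle, keeping their experience consistent across requests.
        digest = hashlib.sha256(f"{self.name}:{user_id}".encode()).hexdigest()
        bucket = int(digest, 16) % 100
        return bucket < self.rollout_percent


if __name__ == "__main__":
    checkout = FeatureToggle("new-checkout", enabled=True, rollout_percent=10, allow_list={"qa-team"})
    print(checkout.is_on_for("qa-team"))  # allow-listed users always see the feature
    print(checkout.is_on_for("customer-42"))
```

Because each user's bucket is derived from a hash of the toggle name and user ID, raising `rollout_percent` from 10 to 50 keeps the users who were already enabled while adding more. If monitoring shows a problem, setting `enabled=False` switches the feature off for everyone, including the allow list, without a redeploy of the surrounding code.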

Observability is particularly important. It shifts the conversation from reacting to defects to understanding system behavior in production.

Learning From Production

Quality and testing practices don’t end after release. The production environment is also a space for generating insights that guide future decisions.

This learning is supported by:

- System monitoring and observability

- Usage analytics

- Hypothesis-driven experiments

Information gathered in production influences future discovery phases, risk prioritization, feature refinement, and improvements to system architecture. In this way, improvement decisions are based on real data rather than assumptions.

Quality as a Shared Responsibility

A core principle of this approach is that quality cannot be delegated to a specific role or department. It is a shared responsibility of the entire team.

Each role contributes its own expertise:

- Business representatives clarifying purpose and validating delivered value

- Developers designing for testability

- Testers facilitating risk exploration and promoting testing practices throughout the process

- UX professionals representing user needs

- Leadership fostering psychological safety

The tester role is not conceived as a gatekeeper or quality police, but as a facilitator and advocate for quality and testing practices throughout the process.

Aligning Process Quality and System Quality

One of the most important distinctions in the Holistic Testing Model is the difference between process quality and system quality.

Process quality focuses on how we build:

- Coding standards

- Automation coverage

- Continuous integration

- Definition of Done

System quality focuses on what users experience:

- Usability

- Performance

- Reliability

- Accessibility

- Business value

Both dimensions are necessary and complementary. Internal process discipline does not automatically translate into external value. Teams that optimize only internal metrics may lose sight of user outcomes. Conversely, teams that focus exclusively on the external experience may accumulate hidden technical debt.

Sustainable quality depends on a deliberate balance between internal discipline and external value. The Holistic Testing Model makes this need for alignment explicit throughout the lifecycle.

Why the Holistic Testing Model Matters

Today’s systems, whether new or legacy, often evolve continuously and integrate many components and dependencies. Linear strategies that treat testing as a phase after development struggle to keep pace with this level of complexity and change.

The Holistic Testing Model makes quality practices visible and turns them into an explicit topic of conversation, helping teams to:

- Build quality in

- Create fast feedback loops

- Reduce systemic risk

- Strengthen collaboration

- Enable continuous improvement

Frequently Asked Questions About the Holistic Testing Model

What is the Holistic Testing Model?

How does the Holistic Testing Model improve quality outcomes?

Is it the same as agile testing?

Why is it relevant for modern systems?

Why should technology leaders care?

What are the common barriers to adopting it?

Evaluate the Maturity of Your Software Quality Strategy

Sustainable quality requires more than isolated testing efforts. Our Software Quality Maturity Assessment helps you analyze how your team balances prevention, validation, and learning, and identify the next steps to strengthen your strategy.